The gap between imagination and execution has always been a quiet barrier in visual storytelling. You may have a compelling photo, a clear idea, and even a strong emotional direction, but turning that into motion used to require editing software, timelines, and technical skill. What I found interesting while exploring Image to Video AI is that the process is no longer about editing frames. It is about describing intent.

That shift changes who gets to create moving images. It also changes how quickly ideas can be tested, discarded, and refined.

Why Static Visuals Are No Longer Enough

There was a time when a single image could carry attention. That time is fading.

Attention Now Requires Motion

Short-form video platforms have redefined baseline expectations. A static image now feels incomplete in environments built around movement.

Production Friction Still Exists

Traditional video production introduces friction:

- Editing timelines

- Motion keyframes

- Rendering pipelines

These are not just tools—they are barriers for non-editors.

AI Changes the Starting Point

Instead of asking “how do I animate this,” the question becomes:

- What should move?

- How should it feel?

That shift is subtle but important.

How The System Interprets Creative Intent

What stands out is not just generation but interpretation.

From Description To Motion Logic

Language Becomes Instruction

In my testing, phrases like:

- “slow cinematic zoom”

- “gentle wind movement”

- “dramatic lighting shift”

are translated into actual motion patterns.

This suggests the system is mapping language to visual behaviors rather than applying fixed templates.

Image As Structural Anchor

The uploaded image is not just input—it defines:

- composition

- subject placement

- visual hierarchy

Everything else adapts around it.

Model Selection Matters

Different models (for example, one geared toward cinematic output versus one geared toward stylized output) appear to influence:

- motion smoothness

- realism

- interpretation consistency

The system does not expose technical parameters, but the variation is noticeable.

How The Workflow Actually Feels In Practice

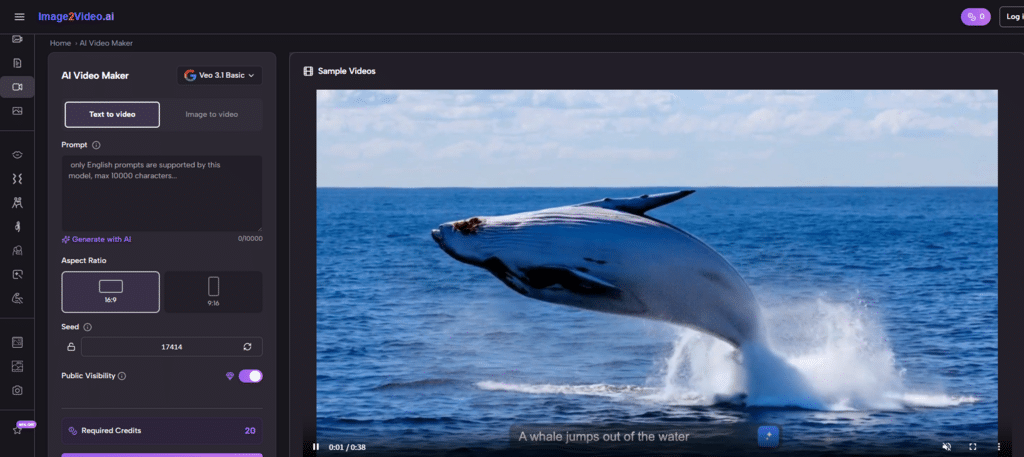

The official flow is minimal, and in practice it stays that way.

Three Steps From Idea To Output

Step 1: Upload A Base Image

You start with a JPEG or PNG. This defines the entire scene.

Step 2: Add A Prompt Description

You describe motion, atmosphere, and style using natural language.

Step 3: Generate And Wait

The system processes the request, typically taking a few minutes, and returns a video output.

There are no intermediate editing steps. That is both the strength and the limitation.
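To make the shape of those inputs concrete, here is a minimal Python sketch of what a request to such a tool might carry. It is an illustration only: the tool is used through its web interface and I have not seen a documented API, so `GenerationRequest`, `build_prompt`, and `build_request` are hypothetical names. The only facts taken from the workflow are the accepted image formats (JPEG or PNG) and the three things the prompt describes (motion, atmosphere, style).

```python
from dataclasses import dataclass
from pathlib import Path

# File types named in Step 1 (JPEG or PNG).
ALLOWED_SUFFIXES = {".jpg", ".jpeg", ".png"}


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical container for the two inputs the workflow asks for."""
    image_path: Path
    prompt: str


def build_prompt(motion: str, atmosphere: str = "", style: str = "") -> str:
    """Join the non-empty descriptions into one comma-separated prompt."""
    parts = [p.strip() for p in (motion, atmosphere, style) if p.strip()]
    if not parts:
        raise ValueError("prompt needs at least one non-empty description")
    return ", ".join(parts)


def build_request(image_path: str, motion: str,
                  atmosphere: str = "", style: str = "") -> GenerationRequest:
    """Check the image extension, then pair the image with its prompt."""
    path = Path(image_path)
    if path.suffix.lower() not in ALLOWED_SUFFIXES:
        raise ValueError(f"expected a JPEG or PNG, got {path.suffix or 'no extension'!r}")
    return GenerationRequest(path, build_prompt(motion, atmosphere, style))
```

Calling `build_request("beach.png", "slow cinematic zoom", "gentle wind movement")` would pair the image with the prompt `"slow cinematic zoom, gentle wind movement"`. Everything after that point (upload, processing, the returned video) happens inside the tool and is deliberately left out of the sketch.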

Comparing This Approach With Traditional Methods

| Aspect | AI-Based Generation | Traditional Editing |
|---|---|---|
| Entry Barrier | Very low | High |
| Time To Output | Minutes | Hours |
| Control Precision | Medium | High |
| Learning Curve | Minimal | Steep |
| Iteration Speed | Fast | Slow |

This comparison is not about replacement. It is about workflow differences.

Where This Approach Feels Most Natural

Not every use case benefits equally.

Social Content Creation

Short videos, especially those built around mood or reaction, benefit from:

- speed

- experimentation

- variation

Concept Visualization

When exploring ideas:

- you can test multiple directions quickly

- discard weak ones early

Personal Storytelling

Turning old photos into motion sequences feels less like editing and more like reinterpreting memory.

Where Limitations Still Appear

No system removes trade-offs.

Prompt Dependency

Results vary significantly based on how clearly intent is described.

Inconsistent Motion Detail

In some outputs, fine motion details can feel slightly artificial.

Iteration Required

Getting a precise result often requires multiple attempts.

These are not flaws unique to this system—they are typical of current generative pipelines.
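Because results depend on wording and usually take several attempts, it can help to vary one part of the prompt at a time instead of rewriting it from scratch. The sketch below is one way to do that in Python. The function name `prompt_variants` and the pacing phrases are my own illustrative assumptions, not something the tool provides or requires.

```python
from itertools import product


def prompt_variants(motions, moods, pacings):
    """Return every motion/mood/pacing combination as a prompt string."""
    return [", ".join(combo) for combo in product(motions, moods, pacings)]


variants = prompt_variants(
    motions=["slow cinematic zoom", "gentle wind movement"],
    moods=["dramatic lighting shift"],
    pacings=["unhurried pacing", "steady pacing"],  # illustrative phrases
)

# Print a numbered list to paste into the prompt box one at a time.
for number, prompt in enumerate(variants, start=1):
    print(f"{number}. {prompt}")
```

Two motions, one mood, and two pacings give four prompts. Trying them against the same base image makes it easier to see which phrase is responsible for a change in the output.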

What This Signals About The Direction Of Creation

The most interesting shift is not speed. It is abstraction.

From Tools To Intent Layers

Instead of manipulating:

- frames

- keyframes

- transitions

you describe:

- mood

- movement

- pacing

The system fills in the mechanics.

A Different Kind Of Creative Skill

The skill is no longer technical execution. It becomes:

- describing clearly

- refining prompts

- understanding visual language

Where Photo To Video Fits In This Transition

Later in my testing, I explored the broader idea of Photo to Video workflows, not as a feature but as a category. The key difference from traditional editing is that the transformation is not a linear sequence of edits. It is interpretive generation.

That distinction matters. It suggests future tools may rely less on manual control and more on expressive input.

What This Means For Creators Moving Forward

The implication is not that traditional tools disappear.

It is that:

- early-stage ideation accelerates

- experimentation becomes cheaper

- more people can participate in visual storytelling

For many creators, the first version of an idea may no longer be edited. It may be generated.

And that alone changes how creative processes begin.