The traditional pipeline for a product launch video is a sequence of slow-moving bottlenecks. It begins with a creative brief, moves into storyboarding, transitions to a week of location scouting or studio booking, and eventually culminates in a post-production cycle that can stretch for another month. For a product team operating in a high-velocity market, this timeframe is often incompatible with the reality of shifting specs and rapid consumer feedback.

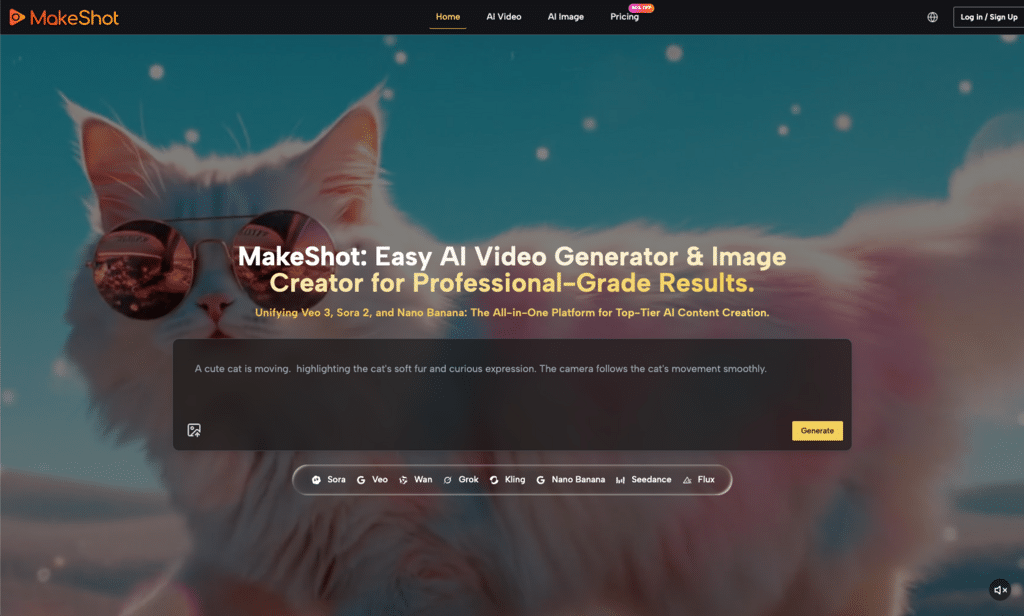

In recent months, the introduction of the AI Video Generator into professional workflows has changed the internal math for these teams. We are no longer looking at a binary choice between “expensive video” and “no video.” Instead, the conversation has shifted toward how we can use an AI Video Generator to stress-test creative concepts before a single dollar is spent on a physical shoot—or, in many cases, to replace the shoot entirely.

The Performance Marketing Bottleneck

Performance marketing relies on volume and iteration. To find a winning ad creative, you often need to test twenty different hooks, three different background settings, and five different lighting styles. Under the old model, producing these variations was cost-prohibitive. You picked one “best guess” and hoped it converted.
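To make that scale concrete, here is a minimal sketch that enumerates the test matrix implied by those numbers. It assumes a full-factorial test, where every hook is paired with every background and every lighting style; the labels are placeholders, and only the counts come from the paragraph above.

```python
from itertools import product

# Placeholder labels; only the counts (20, 3, 5) come from the text.
hooks = [f"hook_{i}" for i in range(1, 21)]
backgrounds = [f"background_{i}" for i in range(1, 4)]
lighting_styles = [f"lighting_{i}" for i in range(1, 6)]

# Full-factorial assumption: each hook is combined with each
# background and each lighting style.
variants = list(product(hooks, backgrounds, lighting_styles))

print(len(variants))  # 300
```

Three hundred distinct creatives is far beyond what a traditional shoot can deliver, which is why the old model settled for a single best guess.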

By integrating an AI Video Generator into the early stages of ad creation, marketers can now move from a static image to a moving asset in roughly sixty seconds. This isn’t just about speed; it is about the ability to fail faster. If a specific product angle doesn’t resonate in a generated draft, it is discarded immediately, rather than being discovered as a failure after a $50,000 production cycle.

Evaluating Model Diversity for Product Fidelity

One of the most significant misunderstandings about using an AI Video Generator is the “one-size-fits-all” trap. Professional creators realize that different underlying models—such as Google Veo, Kling, or Runway—handle physics and textures differently.

For instance, if you are launching a luxury watch, you need a model that excels at specular highlights and metallic reflections. If you are launching a beverage, the fluid dynamics of a pour are the primary concern. A robust platform allows an operator to toggle between these models to see which engine renders the product’s specific attributes with the least “hallucinated” distortion. It is here that we encounter our first limitation: no AI Video Generator currently handles complex fluid-to-container physics with 100% accuracy every time. There is often an “uncanny valley” effect in liquid motion, and getting a usable clip can take multiple reruns with different random seeds.

The Workflow: From Static Asset to Kinetic Ad

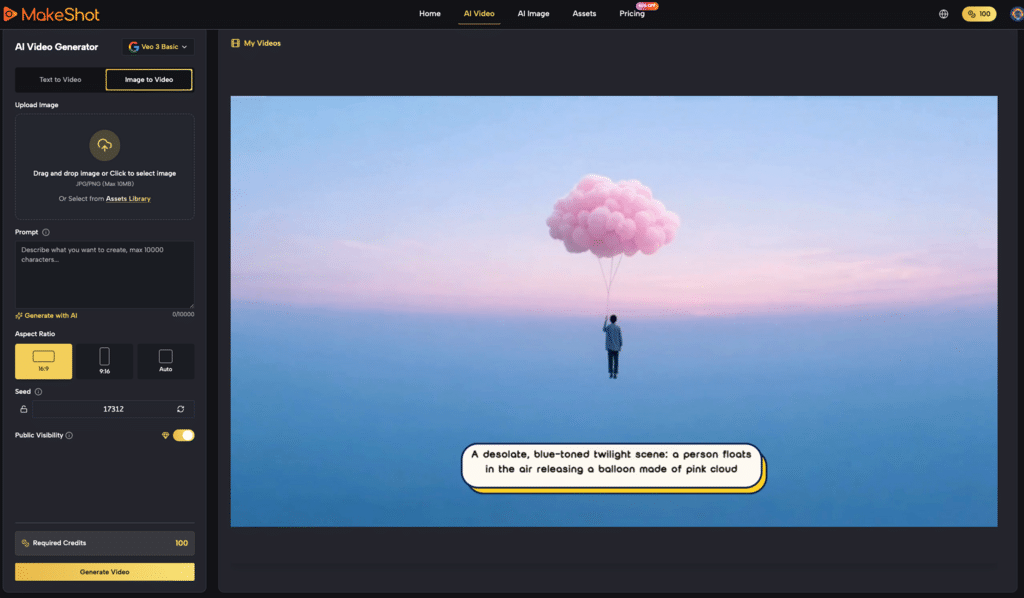

The most practical application for product teams isn’t text-to-video; it is image-to-video. When you have a high-resolution render of your product, you can use an AI Video Generator to animate it. This ensures the product itself remains consistent while the environment around it changes.

- Seed Selection: Start with a clean, high-fidelity image of the product.

- Motion Prompting: Define the camera move (e.g., a slow orbital pan or a top-down reveal).

- Iteration: Use the AI Video Generator to create four variations of that movement.

- Refinement: Select the most stable render and use it as the foundation for your social media hooks.
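The four steps above can be sketched as a small selection loop. This is only an illustration under stated assumptions: `generate` and `stability_score` are hypothetical callables that the team would supply (a wrapper around whichever generator is in use, and whatever review or scoring method the team trusts). They are not part of any real vendor API. The `seeds` here are random seeds for the reruns, not the source image from the first step.

```python
from typing import Callable, Sequence


def pick_most_stable(
    image_path: str,
    motion_prompt: str,
    generate: Callable[[str, str, int], str],   # hypothetical: returns a clip path
    stability_score: Callable[[str], float],    # hypothetical: higher is more stable
    seeds: Sequence[int] = (1, 2, 3, 4),
) -> str:
    """Generate one variation per seed and return the most stable clip."""
    # Iteration: one clip per random seed, same image and camera move.
    clips = [generate(image_path, motion_prompt, seed) for seed in seeds]
    # Refinement: keep the clip with the highest stability score.
    return max(clips, key=stability_score)
```

In practice the scoring step may simply be a person reviewing four clips side by side; the point of the sketch is that the input image and camera move stay fixed while only the seed varies.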

This workflow drastically reduces the cognitive load on the creative team. Instead of wondering how a product might look in a specific lifestyle setting, they can simply see it. This “show, don’t tell” approach speeds up internal approvals, as stakeholders are no longer squinting at charcoal-sketched storyboards.

Testing the Limits of Visual Consistency

A recurring challenge for any product team is maintaining brand consistency. An AI Video Generator is essentially a probabilistic engine; it predicts the next frame based on patterns. This means that “brand colors” can sometimes drift. A specific shade of navy blue might lean toward teal in one render and charcoal in another.

This is where a skeptical, benchmark-driven approach is necessary. We cannot yet trust an AI Video Generator to reproduce a specific hex code across a 10-second sequence without some post-production color grading. Marketing leads must accept that these tools are best used for “vibe” and “motion,” while the final brand polish may still require a human touch in a traditional editing suite. This limitation is the trade-off for a large increase in output volume.
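One way to put a number on color drift is to compare the brand color against a color sampled from each frame. The sketch below is a rough check, not a color-science tool: it assumes you have already sampled one representative RGB value per frame (for example, by averaging pixels over the product area, which is not shown), it uses plain Euclidean distance in RGB rather than a perceptual metric, and the `tolerance` of 30 is an arbitrary illustrative value.

```python
import math


def hex_to_rgb(hex_code: str) -> tuple[int, int, int]:
    """Convert '#RRGGBB' or 'RRGGBB' into an (R, G, B) tuple."""
    value = hex_code.lstrip("#")
    if len(value) != 6:
        raise ValueError(f"expected a 6-digit hex code, got {hex_code!r}")
    return tuple(int(value[i:i + 2], 16) for i in (0, 2, 4))


def drifted_frames(brand_hex, sampled_colors, tolerance=30.0):
    """Return indices of frames whose sampled color is too far from the brand color."""
    target = hex_to_rgb(brand_hex)
    return [
        index
        for index, color in enumerate(sampled_colors)
        if math.dist(target, color) > tolerance  # Euclidean distance in RGB
    ]


# Illustrative samples: close to navy, then teal-leaning, then charcoal-leaning.
samples = [(2, 3, 126), (0, 128, 128), (54, 69, 79)]
print(drifted_frames("#000080", samples))  # [1, 2]
```

Frames flagged this way are candidates for color grading or a rerun; the check does not replace a final review in the editing suite.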

Managing the “AI Feel” in Ad Creatives

There is a specific cadence to AI-generated movement—often characterized by perfectly smooth, almost weightless camera pans—that savvy consumers can identify. To avoid this “AI feel,” operators are learning to prompt for imperfections. Adding keywords like “handheld camera shake,” “slight lens flare,” or “natural lighting” helps ground the output.

The goal for a product launch isn’t necessarily to trick the viewer into thinking the video was shot on an Arri Alexa, but rather to remove the friction of the medium. When an AI Video Generator is used correctly, the viewer focuses on the product’s value proposition rather than the novelty of the technology used to create the ad.

Cost-Efficiency and the “Good Enough” Threshold

For 90% of social media advertising, the “Good Enough” threshold is lower than most creative directors are willing to admit. On a platform like TikTok or Instagram, where the lifespan of an ad creative might be forty-eight hours, the ROI on a high-end studio production is often negative.

By contrast, an AI Video Generator allows a team to produce a week’s worth of content for the cost of a single lunch. This shift in the economics of production allows teams to spend more of their budget on distribution and media buying. Instead of spending 80% of the budget on production and 20% on ads, they can flip the script. This is the most compelling argument for the tool-savvy marketer: the decentralization of production.

The Role of Human Prompt Engineering

We often hear that AI replaces the creator, but in the context of a high-stakes launch, the AI Video Generator is simply a more powerful brush. The “human in the loop” is more important than ever. A prompt that reads “product video for a sneaker” will produce a generic, unusable mess. A prompt that reads “low-angle tracking shot, cinematic lighting, 8k, bokeh background, urban sunset environment, realistic textures” requires an operator who understands cinematography.

Product teams need to treat the AI Video Generator as a junior cinematographer. You have to give it clear directions, correct its mistakes, and sometimes fire it and start over. The “no-nonsense” reality is that you will likely discard 70% of what the generator produces. The win is that the 30% you keep is still cheaper and faster than the old way.
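The discard rate changes the effective price of a clip, which is worth working out before comparing budgets. The sketch below assumes a flat cost per generation and treats the 30% keep rate from the paragraph above as a long-run average; the $1.00 figure is a made-up placeholder, not a real price.

```python
def cost_per_usable_clip(cost_per_generation: float, keep_rate: float) -> float:
    """Effective cost of one kept clip, given the fraction of generations kept."""
    if not 0 < keep_rate <= 1:
        raise ValueError("keep_rate must be in the range (0, 1]")
    return cost_per_generation / keep_rate


# Hypothetical price of $1.00 per generation, keeping 30% of outputs.
effective = cost_per_usable_clip(1.00, 0.30)
print(f"{effective:.2f}")  # 3.33
```

At a 30% keep rate, each usable clip costs a little over three times the price of a single generation, which is the figure to compare against a traditional production quote.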

Where the Technology Currently Stalls

It is important to reset expectations regarding text rendering and complex human interactions. If your product launch involves a person unboxing a device and interacting with a touch screen, an AI Video Generator will likely struggle. The fine motor skills—fingers touching specific points on a screen—often result in “mashing” or anatomical glitches.

For these high-complexity shots, the best strategy is a hybrid one. Use the AI Video Generator for the sweeping atmospheric shots, the product close-ups, and the lifestyle backgrounds. Use traditional filming for the specific “human interaction” moments that require 100% anatomical accuracy.

Integrating AI into the Creative Operations Pipeline

For organizations looking to scale, the AI Video Generator should not be a “toy” used by one person. It needs to be integrated into the Creative Operations (CreativeOps) workflow. This means creating a library of “proven prompts” that match the brand’s visual identity.

When a new product variant is launched, the team shouldn’t start from scratch. They should pull the “Luxury Cinematic” prompt template, swap the product image, and generate the new assets. This systematized approach is what separates the hobbyists from the professional agencies who are currently winning the attention economy.
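A prompt library of this kind can be as simple as a dictionary of named templates. The sketch below is one possible shape, not a vendor integration: `PromptTemplate`, `PROMPT_LIBRARY`, and `build_request` are hypothetical names, the request dictionary does not match any particular generator's API, and the keywords in the “Luxury Cinematic” entry are borrowed from the example prompt earlier in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    name: str
    camera_move: str
    style_keywords: tuple[str, ...]

    def render(self, product_description: str) -> str:
        """Combine the product description with the template's fixed keywords."""
        parts = [product_description, self.camera_move, *self.style_keywords]
        return ", ".join(parts)


# Hypothetical library of brand-approved templates.
PROMPT_LIBRARY = {
    "Luxury Cinematic": PromptTemplate(
        name="Luxury Cinematic",
        camera_move="low-angle tracking shot",
        style_keywords=("cinematic lighting", "bokeh background", "realistic textures"),
    ),
}


def build_request(template_name: str, product_image_path: str, product_description: str) -> dict:
    """Swap in a new product image and description; the template stays fixed."""
    template = PROMPT_LIBRARY[template_name]
    return {
        "image": product_image_path,
        "prompt": template.render(product_description),
    }
```

For a new variant, a call such as `build_request("Luxury Cinematic", "watch_v2.png", "steel chronograph watch")` reuses the approved camera move and style keywords and changes only the product-specific inputs.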

Practical Advice for the Next Campaign

If you are a product lead or a marketer looking to start, don’t aim for a 60-second masterpiece. Start with a “Motion Poster.” Take your best product shot, put it into an AI Video Generator, and add a simple “dolly-in” motion. Use that as a background for a 6-second YouTube pre-roll.

Once you see the lift in engagement from even that small bit of motion, the value of the tool becomes undeniable. The future of product launches isn’t about the “one big video”; it’s about the thousand small variations that find the right customer at the right time.

The Shift from Production to Curation

In this new era, the role of the creative director shifts from “managing a shoot” to “curating a feed.” When you have an AI Video Generator capable of producing hundreds of clips an hour, the skill lies in selecting the three that actually communicate the brand’s soul.

This requires a move away from the “perfectionist” mindset toward an “experimentalist” one. The uncertainty of what the AI will produce is no longer a bug; it is a feature that allows for happy accidents: visual combinations that a human storyboarder might never have imagined. As long as you stay openly cautious about the tool’s limitations, the AI Video Generator is currently the most significant leverage point in a marketer’s toolkit.